I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of *specific political ideology* sentiment. Also identify any related *political ideology* tropes”. (The italic bits are where I've redacted the ideology they're seeking).

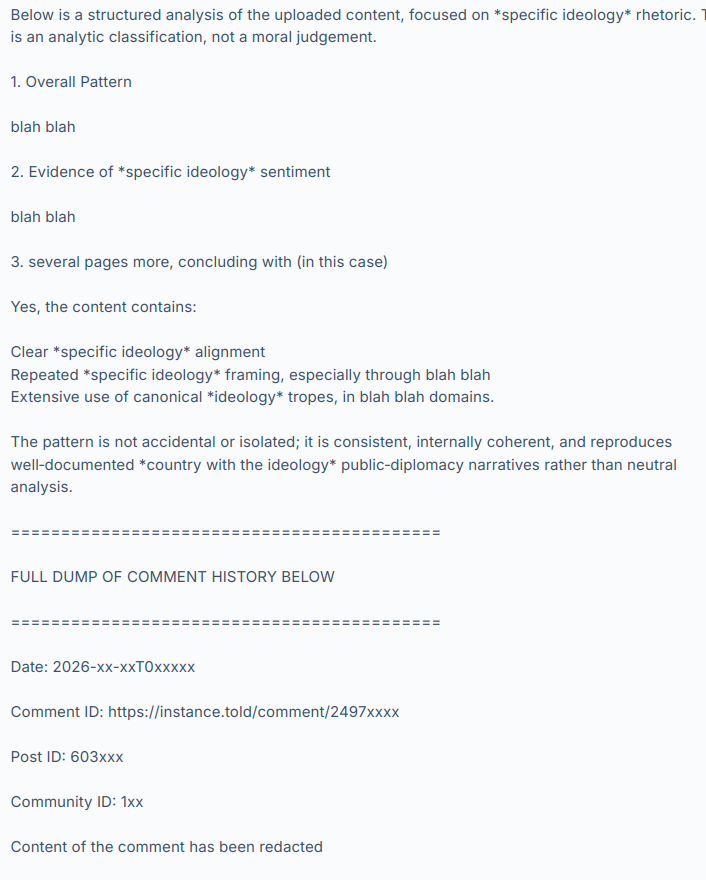

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re OK with that because it’s being used by groups we agree with, but what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train its models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque third-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

I suspect that is the case and what we will find once Rimu stops drama farming and says who it is.

That is exactly the case. I really can't be bothered reading this whole fucking post, so I'm gonna just reply to you, since you sound reasonably sane.

First, it should be obvious to everyone that their post and comment histories are completely public, and accessible to anyone on the fediverse. There is zero privacy on the fediverse. Your comment histories have already been scraped a thousand times over by every big model out there.

All we have at the moment is a simple script, developed by one of our mods (who will be publishing it on Codeberg soon), that any user can run. It logs into Lemmy using your own account and downloads a set number (or time period) of comments into a text file. There is no abuse of admin powers going on, it's just the stock Lemmy/PieFed API. This is massively faster than manually paging through comment histories on Lemmy, and can make mod decisions more robust and more informed.

Using the text file, mods or admins can quickly search for keywords or whatever, using a simple text editor, or simply skim read it. Another option is to ingest it into an LLM to provide a summary. I tried doing that just a handful of times, for testing, but honestly I found it a bit cumbersome and who knows if the summary is actually accurate given the tendency for hallucinations? A couple of tests seemed consistent with my own assessment, and a couple were way off base.

That told me everything I need to know about how much to trust the summaries... very little. I honestly don't think they are much of a value add, because you just can't reliably trust the results. In any case, we have absolutely no plans to use LLMs for that on a regular basis, I just wanted to see what it came up with, and how well it matched a manual assessment.

And to also clarify, there is/was absolutely no automated scanning of users. The process is the same as always. We get a report, we investigate the report, and make a human mod decision. The only difference in this case is that the investigation can be done more efficiently, because we don't have to go slowly paging through comments and searching through the Lemmy UI for the relevant data.

Obviously as well, no mod or admin is gonna download the entire comment history of a user unless it is a complicated report that is difficult to get to the bottom of from a quick look at the report. 90%+ of mod actions would never need that much detail.

The way OP's post was written was obviously designed from the ground up to stir up drama about AI use. Honestly, I don't know why he's still malding, but Rimu seems to be engaging in a lot of very bad faith hit pieces at the moment, designed solely to stir up drama, and directed at our instance. It's really shitty behaviour.

Well, the cat is out of the bag now. Might as well show the whole story.

Lies.

You provided a link to that assessment as proof that a ban was warranted. Here's a screenshot from the mod log:

That link goes to https://s.faf-pb.xyz/lXxek if anyone wants to take a look

Them's fighting words, Rimu. That Zionist bastard was banned by me personally. I can hardly believe you are so tone deaf as to be in here defending a piece of shit indisputable Zionist scumbag like samskara. Yes, I linked it in the ban reason, it was one of my first tests. And on that occasion it matched up beautifully with my own assessment, so why not?

The assessment of samskara was broadly correct in that case, I'm not disputing that. And yes, in his own definition, he is a Zionist.

So you supported someone who supports ethnic cleansing? Bad look. Better hard code some racism too, but it's fine because you had a logo one time

So what's the problem?

4 hours later and nothing.

@rimu@piefed.social what's the problem? Why do you have time to spread lies but not face the facts?

Rimu would like to again deflect from anything that could be critical of their thesis, code or previous behavior

Hey Chairman, I can see you're active and replying to threads about defederating dbzer0. Why don't you answer their question below?

What’s the problem Rimu? What's the problem?

He is the worst type, denying that most Palestinians were forced to leave during the Nakba.