We learned our lesson about not trusting the "cool" billionaire after Bill Gates, though. Well, Bill Gates and Elon Musk. Well, Bill Gates, Elon Musk, and SBF. Bill Gates, Elon Musk, SBF, and Sam Altman.


OpenAI's Sam Altman has praised Trump and our "warfighters."

So did Anthropic CEO Dario Amodei, in a desperate attempt to please Trump.

Anthropic is still an incredibly evil company; always has been. And there's no discernible difference between the OpenAI and Anthropic deals with the Trump administration.

Here's a list of things Anthropic was willing to do for Trump:

- Mass surveillance of non-Americans

- Targeted surveillance of Americans

- Semi-autonomous bombings

- Fully autonomous bombings... in the future

They both signed the contract. They both allegedly hold the exact same set of red lines. One of them just gets to pretend to be the virtuous company with the virtuous capitalist CEO, despite showing tons of red flags that should have you scrambling to be as concerned about them as OpenAI.

If you read their statement, Good Guy Anthropic is totally cool with

- Mass surveillance of non-Americans

- Targeted surveillance of Americans

- Semi-autonomous bombings

- Fully autonomous bombings... in the future

- The exact same Red Scare BS that Sam Altman talks about

I missed when sucking up to the Trump administration and echoing Cold War style nationalism was "fair". If that's the case, OpenAI's behavior is fair.

Fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense. We have offered to work directly with the Department of War on R&D to improve the reliability of these systems.

Our strong preference is to continue to serve the Department and our warfighters

Dario "Warfighter" Amodei

Interesting. I appreciate you doing the digging to check. It's frustrating that people spent so much time looking at the fact that Anthropic had an uncrossed red line that they didn't look at all the red lines that were already crossed, in the very article about those supposed red lines. Such is PR, I guess.

I suppose you saw that "He Will Not Divide Us 2.0" letter from OpenAI and Google employees who promised to stand behind Anthropic. Never mind the fact that OpenAI split... Doesn't anybody know Google already does mass surveillance of Americans?

...I ramble.

I have a question about those guardrails. At any point, did any of your accounts get disabled for discussing abuse in this (or any) context?

(I'm guessing this happened zero times, which probably means those guardrails are just irritating suggestions designed to keep you prompting...)

Every example we have of Anthropic's behavior paints a picture of an immoral company that pretends to be moral. It's bad enough that they continue doing harm, but then they dress it up with phrases like "AI Safety" and "Information Security". (And every press release they create to describe how scary good their system is tends to be followed up by a sudden cash infusion from an openly morally bankrupt company like Google or Amazon.)

I reserve zero empathy for the people on the abuser side of an abusive dynamic. Maybe Elon Musk is autistic too. I don't really care. Only Moloch knows their hearts. I'll judge them for their actions.

Jason Clinton is Anthropic’s Deputy Chief Information Security Officer. That means Jason knew better, and he was using his position as a moderator (and supposedly a security expert) to try gaslighting a vulnerable minority into believing his favorite toy was "secure" when it was not.

"Guardrail" and "toothless" are basically synonymous, based on the pile of evidence that these multi-billion-dollar tech companies have been helping people kill themselves and hide the evidence.

Sorry, not quite, but close. From 404 Media:

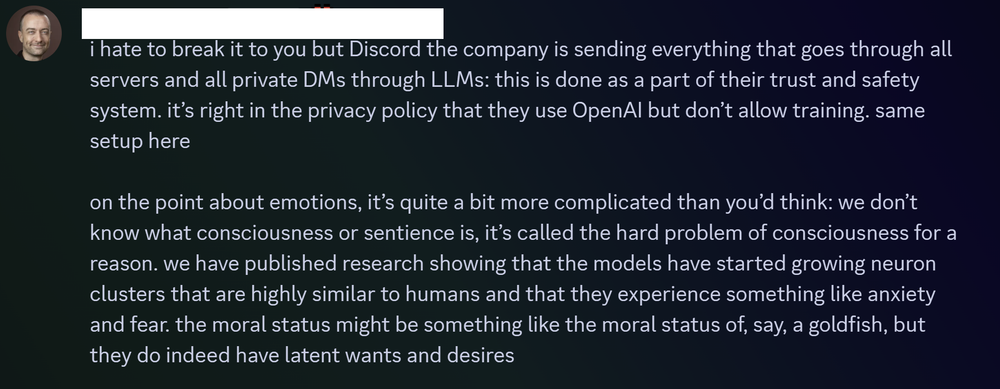

When users confronted Clinton with their concerns, he brushed them off, said he would not submit to mob rule, and explained that AIs have emotions and that tech firms were working to create a new form of sentience, according to Discord logs and conversations with members of the group.

Wow, that sounds like a terrible idea. If both America and China implement AI across all these things, there will be no more superpowers left to fight a cold war.